
Score of a probability function

If you have a probability density function $p$, the score is the derivative of its logarithm (the natural log in many cases) with respect to some parameters $\theta$:

$l(x; \theta) = \frac{\partial}{\partial \theta} \ln p(x; \theta)$

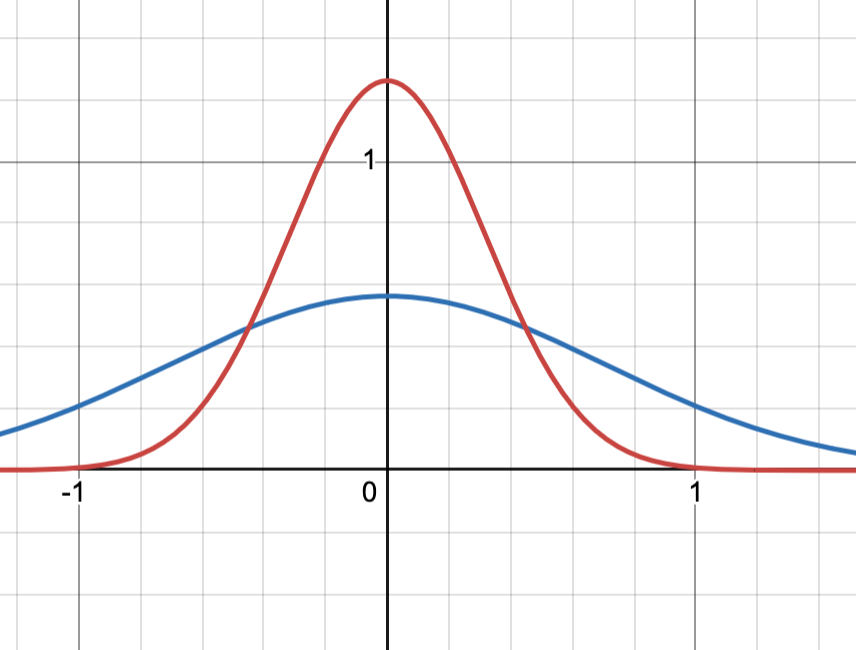

The plot shows a Gaussian with $\mu=0$ and $\sigma^2 = 0.1$ (red) and $\sigma^2=0.5$.
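As a rough sketch of the definition for the two Gaussians mentioned here, the snippet compares the closed-form score with a finite-difference derivative of the log density. It assumes the score is taken with respect to the mean $\mu$, which the text does not specify. The function names (`gaussian_log_pdf`, `score_mu`, `numerical_score_mu`) are made up for illustration.

```python
import math


def gaussian_log_pdf(x, mu, sigma2):
    """Natural log of the Gaussian density with mean mu and variance sigma2."""
    return -0.5 * math.log(2 * math.pi * sigma2) - (x - mu) ** 2 / (2 * sigma2)


def score_mu(x, mu, sigma2):
    """Closed-form score w.r.t. mu (assumed parameter): d/dmu ln p = (x - mu) / sigma2."""
    return (x - mu) / sigma2


def numerical_score_mu(x, mu, sigma2, eps=1e-5):
    """Central finite difference of the log density w.r.t. mu."""
    upper = gaussian_log_pdf(x, mu + eps, sigma2)
    lower = gaussian_log_pdf(x, mu - eps, sigma2)
    return (upper - lower) / (2 * eps)


if __name__ == "__main__":
    # The two variances from the plot, both with mu = 0.
    for sigma2 in (0.1, 0.5):
        for x in (-1.0, 0.0, 0.5, 1.0):
            analytic = score_mu(x, 0.0, sigma2)
            numeric = numerical_score_mu(x, 0.0, sigma2)
            print(f"sigma2={sigma2} x={x:+.1f} analytic={analytic:+.4f} numeric={numeric:+.4f}")
```

Under this assumption the score is linear in $x$, and the narrower Gaussian ($\sigma^2 = 0.1$) has the steeper slope.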

Log likelihood is often used in ML to convert the learning problem into an optimization one.

In the context of Fisher information, the score sort of fattens up the edges of the Gaussian: it grows in magnitude away from the mean, where the density itself is close to zero. More importantly, it allows for a neat cancellation during the derivation.
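The text does not say which cancellation is meant. One common candidate, sketched here under the assumption that differentiation and integration can be swapped, is the $1/p$ factor from the log-derivative cancelling against the density inside an expectation:

$\frac{\partial}{\partial \theta} \ln p(x; \theta) = \frac{1}{p(x; \theta)} \frac{\partial p(x; \theta)}{\partial \theta}$

$\mathbb{E}_{x \sim p}\left[\frac{\partial}{\partial \theta} \ln p(x; \theta)\right] = \int p(x; \theta) \, \frac{1}{p(x; \theta)} \frac{\partial p(x; \theta)}{\partial \theta} \, dx = \frac{\partial}{\partial \theta} \int p(x; \theta) \, dx = \frac{\partial}{\partial \theta} 1 = 0$

So under these assumptions the score has zero mean, and its variance is what the Fisher information measures.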